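This write-up covers only variable selection for models built on the CRE data. Variables are chosen mainly with the Python forward-backward selection shown below: 60% of the data is split off for variable screening, and LOOCV is then used to compare the accuracy of models built from the different variable sets.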
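As a minimal sketch of the per-class split described above, the snippet below shuffles each class separately and sends 60% of it to a selection set. It uses only synthetic labels (46 positives and 49 negatives, the class sizes that appear in the R code later on); `split_per_class` and `selection_frac` are hypothetical names introduced for illustration, and the rounding is not meant to match scikit-learn's.

```python
import random

def split_per_class(labels, selection_frac=0.6, seed=3):
    """Return (selection_idx, rest_idx), splitting each class separately."""
    rng = random.Random(seed)  # local generator, so the split is reproducible
    selection_idx, rest_idx = [], []
    for cls in sorted(set(labels)):
        idx = [i for i, lab in enumerate(labels) if lab == cls]
        rng.shuffle(idx)
        n_selection = round(selection_frac * len(idx))
        selection_idx.extend(idx[:n_selection])
        rest_idx.extend(idx[n_selection:])
    return sorted(selection_idx), sorted(rest_idx)

# Synthetic labels only: 46 positives followed by 49 negatives.
labels = [1] * 46 + [0] * 49
selection_idx, rest_idx = split_per_class(labels)
print(len(selection_idx), len(rest_idx))
```

Note that scikit-learn's `train_test_split` with `test_size = 0.6` returns the 60% part as its second output, so it is worth checking which of the two returned frames is passed on to the selection step.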

Read and split the data

import pandas as pd

import numpy as np

df = pd.read_csv('C:/Users/User/OneDrive - student.nsysu.edu.tw/Educations/NSYSU/fu_chung/bacterial/123.csv')

from sklearn.model_selection import train_test_split

import random

cre = df[df['CRE'].isin([1])].iloc[:,:]

cre['CRE'] = 1

normal = df[df['CRE'].isin([0])].iloc[:,:]

normal['CRE'] = 0

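np.random.seed(3)  # train_test_split draws from NumPy's global RNG when random_state is not given, so seed NumPy rather than the random module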

train_nor, test_nor = train_test_split(normal, test_size = 0.6)

train_cre, test_cre = train_test_split(cre, test_size = 0.6)

data_train = pd.concat([train_nor,train_cre], axis=0)

data_test = pd.concat([test_nor,test_cre], axis=0)

f_x = train_cre.iloc[:,:]

Define the forward-backward selection function
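Before the full implementation, the add-then-drop logic can be illustrated with a toy example. This is only a sketch: `stepwise` takes a hypothetical `pvalue_of` callback instead of fitting a model, and `toy_pvalue` returns made-up p-values chosen so that feature `b` is added first and dropped again once `c` enters.

```python
def stepwise(candidates, pvalue_of, threshold_in=0.01, threshold_out=0.05):
    """Forward-backward selection driven by a user-supplied p-value function.

    pvalue_of(model_features, feature) must return the p-value of `feature`
    in a model that contains `model_features`.
    """
    included = []
    while True:
        changed = False
        # Forward step: try each excluded feature, add the most significant one.
        trial = {f: pvalue_of(included + [f], f)
                 for f in candidates if f not in included}
        if trial:
            best = min(trial, key=trial.get)
            if trial[best] < threshold_in:
                included.append(best)
                changed = True
        # Backward step: drop the least significant included feature, if any.
        current = {f: pvalue_of(included, f) for f in included}
        if current:
            worst = max(current, key=current.get)
            if current[worst] > threshold_out:
                included.remove(worst)
                changed = True
        if not changed:
            return included

def toy_pvalue(model_features, feature):
    """Made-up p-values: 'b' loses significance once 'c' is in the model."""
    if feature == "a":
        return 0.001
    if feature == "b":
        return 0.2 if "c" in model_features else 0.005
    if feature == "c":
        return 0.008 if "a" in model_features else 0.03
    return 0.5  # 'd' is never significant

selected = stepwise(["a", "b", "c", "d"], toy_pvalue)
print(selected)
```

Keeping `threshold_in` below `threshold_out` matters here: a feature that has just been dropped cannot immediately qualify for re-entry, so the loop terminates.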

import pandas as pd

import numpy as np

from sklearn.linear_model import LogisticRegression

import statsmodels.api as sm

def stepwise_selection(X, y,
                       initial_list=[],
                       threshold_in=0.01,
                       threshold_out=0.05,
                       verbose=True):
    """ Perform a forward-backward feature selection
    based on p-value from statsmodels.api.OLS
    Arguments:
        X - pandas.DataFrame with candidate features
        y - list-like with the target
        initial_list - list of features to start with (column names of X)
        threshold_in - include a feature if its p-value < threshold_in
        threshold_out - exclude a feature if its p-value > threshold_out
        verbose - whether to print the sequence of inclusions and exclusions
    Returns: list of selected features
    Always set threshold_in < threshold_out to avoid infinite looping.
    See https://en.wikipedia.org/wiki/Stepwise_regression for the details
    """
    included = list(initial_list)
    while True:
        changed = False
        # forward step
        excluded = list(set(X.columns) - set(included))
        new_pval = pd.Series(index=excluded, dtype=float)
        for new_column in excluded:
            model = sm.OLS(y, sm.add_constant(pd.DataFrame(X[included + [new_column]]))).fit()
            new_pval[new_column] = model.pvalues[new_column]
        best_pval = new_pval.min()
        if best_pval < threshold_in:
            # idxmin returns the column label; argmin returns an integer position in current pandas
            best_feature = new_pval.idxmin()
            included.append(best_feature)
            changed = True
            if verbose:
                print('Add {:30} with p-value {:.6}'.format(best_feature, best_pval))
        # backward step
        model = sm.OLS(y, sm.add_constant(pd.DataFrame(X[included]))).fit()
        # use all coefs except intercept
        pvalues = model.pvalues.iloc[1:]
        worst_pval = pvalues.max()  # NaN if pvalues is empty
        if worst_pval > threshold_out:
            changed = True
            # idxmax returns the column label of the least significant feature
            worst_feature = pvalues.idxmax()
            included.remove(worst_feature)
            if verbose:
                print('Drop {:30} with p-value {:.6}'.format(worst_feature, worst_pval))
        if not changed:
            break
    return included

Select variable sets 100 times and output the results

import time

f_list = {}

for i in range(100):
    #m1 ,m2 = train_test_split(df, test_size = 0.6)
    tStart = time.time()  # start timing
    train_nor, test_nor = train_test_split(normal, test_size = 0.6)
    data_train = pd.concat([train_nor,train_cre], axis=0)
    X = data_train.iloc[:,0:1471]
    y = data_train.iloc[:,1471:1472]
    result = stepwise_selection(X, y)
    a = i
    cname=str(a)
    f_list.setdefault(cname,result)
    # end of the code being timed
    tEnd = time.time()  # stop timing
    # print the elapsed time
    print ("It cost %f sec" % (tEnd - tStart))  # %f rounds to six decimal places
    print (tEnd - tStart)  # the unrounded value

Add V1201 with p-value 8.84384e-08

Add V1098 with p-value 2.85678e-06

Add V483 with p-value 5.06239e-05

Add V12 with p-value 0.00063702

Add V503 with p-value 0.00201273

Add V936 with p-value 0.0010971

Add V130 with p-value 0.0010447

Add V131 with p-value 1.43096e-07

Add V589 with p-value 0.0013715

Add V47 with p-value 0.00011529

Add V1225 with p-value 0.000221064

Add V220 with p-value 3.62875e-05

Add V354 with p-value 8.08712e-05

Add V50 with p-value 0.00585102

Add V372 with p-value 0.00478135

Add V141 with p-value 0.0055529

Add V533 with p-value 0.00270015

Add V324 with p-value 0.000643428

Add V875 with p-value 0.000698599

Add V509 with p-value 0.00418121

Add V197 with p-value 0.00646997

Add V608 with p-value 0.00819923

It cost 141.595159 sec

141.59515857696533

c = pd.DataFrame(dict([(k, pd.Series(v)) for k, v in f_list.items()]))

c3 = c

c3

| | 0 | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 | … | 90 | 91 | 92 | 93 | 94 | 95 | 96 | 97 | 98 | 99 |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 0 | V1201 | V1201 | V1201 | V1201 | V1139 | V1201 | V1201 | V1201 | V1201 | V1201 | … | V1201 | V1201 | V1201 | V1201 | V1201 | V1201 | V1201 | V1201 | V1201 | V1201 |

| 1 | V1098 | V1098 | V1098 | V503 | V1098 | V1098 | V1098 | V1098 | V1098 | V1098 | … | V1098 | V1098 | V1098 | V1098 | V1098 | V1098 | V1098 | V1098 | V1098 | V1098 |

| 2 | V483 | V109 | V483 | V591 | V512 | V483 | V229 | V229 | V1221 | V483 | … | V1058 | V483 | V109 | V483 | V1019 | V109 | V1019 | V109 | V109 | V1019 |

| 3 | V12 | V216 | V503 | V61 | V5 | V936 | V130 | V130 | V188 | V12 | … | V358 | V484 | V503 | V12 | V188 | V358 | V188 | V304 | V304 | V188 |

| 4 | V503 | V694 | V936 | V376 | V1072 | V148 | V456 | V456 | V247 | V936 | … | V130 | V503 | V304 | V201 | V936 | V217 | V1386 | V503 | V447 | V694 |

| 5 | V936 | V42 | V109 | V270 | V133 | V456 | V936 | V936 | V12 | V503 | … | V131 | V717 | V945 | V1352 | V39 | V887 | V336 | V1084 | V62 | V960 |

| 6 | V130 | V1438 | V185 | V1154 | V523 | V995 | V382 | V382 | V1165 | V130 | … | V614 | V945 | V1086 | V217 | V604 | V694 | V931 | V131 | V875 | V399 |

| 7 | V131 | V970 | V158 | V323 | V1 | V993 | V491 | V191 | V1068 | V131 | … | V247 | V943 | V48 | V8 | V548 | V399 | V135 | V70 | V94 | V1095 |

| 8 | V589 | V887 | V96 | V12 | V995 | V89 | V241 | V1134 | V150 | V589 | … | V6 | V708 | V59 | V269 | V9 | V1424 | V503 | V16 | V997 | V1424 |

| 9 | V47 | V600 | NaN | V455 | V1062 | V696 | V31 | V505 | V473 | V47 | … | V936 | V699 | V139 | V11 | V571 | V600 | V273 | V338 | V694 | V859 |

| 10 | V1225 | V532 | NaN | V197 | V645 | V912 | V820 | V1263 | V1142 | V1225 | … | V456 | V670 | V127 | V938 | V557 | V859 | V178 | V223 | V1112 | V887 |

| 11 | V220 | V399 | NaN | V63 | V665 | V651 | V487 | V5 | V508 | V220 | … | V1245 | V912 | V852 | V475 | V868 | V1095 | NaN | V321 | V1447 | V336 |

| 12 | V354 | V886 | NaN | V296 | V112 | V705 | V824 | V588 | V819 | V580 | … | V619 | V346 | V235 | V341 | V665 | V665 | NaN | V438 | V112 | V931 |

| 13 | V50 | V226 | NaN | V1062 | V232 | V699 | V931 | V234 | V131 | V509 | … | V955 | V705 | V611 | V573 | V914 | V394 | NaN | V138 | V665 | V226 |

| 14 | V372 | V386 | NaN | V809 | V914 | V670 | V1054 | V813 | V541 | V334 | … | V53 | V651 | V480 | V1053 | V112 | V915 | NaN | V583 | V668 | V92 |

| 15 | V141 | V1013 | NaN | V1110 | V1112 | V708 | V388 | NaN | V1166 | V952 | … | V223 | V1439 | V613 | V450 | V668 | V645 | NaN | V249 | V645 | V447 |

| 16 | V533 | V366 | NaN | V389 | V668 | V346 | V1244 | NaN | V6 | V451 | … | V515 | V641 | V33 | V129 | V645 | V1447 | NaN | V389 | V951 | V99 |

| 17 | V324 | V53 | NaN | V406 | V958 | V641 | V298 | NaN | V122 | V148 | … | V824 | V696 | V723 | V852 | V1447 | V232 | NaN | V350 | V859 | V1183 |

| 18 | V875 | V456 | NaN | V508 | V8 | V1381 | V1067 | NaN | V503 | NaN | … | V507 | V347 | V1307 | V750 | V519 | V1112 | NaN | V92 | V399 | V321 |

| 19 | V509 | V205 | NaN | V932 | V985 | V717 | V992 | NaN | NaN | NaN | … | V171 | V187 | V318 | V1120 | V1368 | V112 | NaN | V99 | V190 | V851 |

| 20 | V197 | V163 | NaN | NaN | NaN | V326 | V62 | NaN | NaN | NaN | … | V732 | V14 | V122 | V179 | V878 | V668 | NaN | V744 | V993 | V1060 |

| 21 | V608 | V613 | NaN | NaN | NaN | V241 | V851 | NaN | NaN | NaN | … | V285 | V598 | V555 | V130 | V658 | V875 | NaN | NaN | V943 | V271 |

| 22 | NaN | V1159 | NaN | NaN | NaN | V499 | V224 | NaN | NaN | NaN | … | V1223 | V604 | V468 | V586 | V1305 | V46 | NaN | NaN | V113 | V296 |

| 23 | NaN | V200 | NaN | NaN | NaN | V205 | V44 | NaN | NaN | NaN | … | V1031 | V566 | V388 | V1024 | V405 | V197 | NaN | NaN | V180 | V217 |

| 24 | NaN | V1022 | NaN | NaN | NaN | V852 | V358 | NaN | NaN | NaN | … | V568 | V1133 | V1019 | V1158 | V145 | V9 | NaN | NaN | V945 | V102 |

| 25 | NaN | V108 | NaN | NaN | NaN | V934 | NaN | NaN | NaN | NaN | … | V174 | V323 | V143 | V1133 | V129 | V22 | NaN | NaN | V1018 | V1345 |

| 26 | NaN | V1047 | NaN | NaN | NaN | V419 | NaN | NaN | NaN | NaN | … | V446 | V610 | V544 | V57 | V994 | V1026 | NaN | NaN | V853 | NaN |

| 27 | NaN | V203 | NaN | NaN | NaN | V235 | NaN | NaN | NaN | NaN | … | V1132 | V10 | V1221 | V1029 | V493 | NaN | NaN | NaN | V510 | NaN |

| 28 | NaN | V1051 | NaN | NaN | NaN | V623 | NaN | NaN | NaN | NaN | … | V1065 | V469 | V328 | V602 | V30 | NaN | NaN | NaN | V311 | NaN |

| 29 | NaN | V331 | NaN | NaN | NaN | V910 | NaN | NaN | NaN | NaN | … | V848 | V318 | V982 | NaN | NaN | NaN | NaN | NaN | V835 | NaN |

| … | … | … | … | … | … | … | … | … | … | … | … | … | … | … | … | … | … | … | … | … | … |

| 31 | NaN | V1194 | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | … | V1232 | NaN | V617 | NaN | NaN | NaN | NaN | NaN | V485 | NaN |

| 32 | NaN | V1192 | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | … | V23 | NaN | V816 | NaN | NaN | NaN | NaN | NaN | V502 | NaN |

| 33 | NaN | V1043 | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | … | V822 | NaN | V837 | NaN | NaN | NaN | NaN | NaN | V51 | NaN |

| 34 | NaN | V955 | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | … | V559 | NaN | V919 | NaN | NaN | NaN | NaN | NaN | V388 | NaN |

| 35 | NaN | V915 | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | … | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | V568 | NaN |

| 36 | NaN | V1447 | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | … | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN |

| 37 | NaN | V668 | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | … | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN |

| 38 | NaN | V914 | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | … | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN |

| 39 | NaN | V645 | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | … | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN |

| 40 | NaN | V665 | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | … | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN |

| 41 | NaN | V875 | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | … | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN |

| 42 | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | … | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN |

| 43 | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | … | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN |

| 44 | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | … | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN |

| 45 | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | … | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN |

| 46 | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | … | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN |

| 47 | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | … | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN |

| 48 | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | … | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN |

| 49 | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | … | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN |

| 50 | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | … | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN |

| 51 | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | … | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN |

| 52 | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | … | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN |

| 53 | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | … | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN |

| 54 | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | … | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN |

| 55 | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | … | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN |

| 56 | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | … | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN |

| 57 | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | … | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN |

| 58 | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | … | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN |

| 59 | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | … | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN |

| 60 | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | … | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN | NaN |

61 rows × 100 columns

c3.describe()

| | 0 | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 | … | 90 | 91 | 92 | 93 | 94 | 95 | 96 | 97 | 98 | 99 |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| count | 22 | 42 | 9 | 20 | 20 | 30 | 25 | 15 | 19 | 18 | … | 35 | 31 | 35 | 29 | 29 | 27 | 11 | 21 | 36 | 26 |

| unique | 22 | 42 | 9 | 20 | 20 | 30 | 25 | 15 | 19 | 18 | … | 35 | 31 | 35 | 29 | 29 | 27 | 11 | 21 | 36 | 26 |

| top | V608 | V875 | V158 | V1110 | V645 | V651 | V456 | V1263 | V1068 | V334 | … | V456 | V651 | V59 | V11 | V1305 | V217 | V1098 | V338 | V190 | V217 |

| freq | 1 | 1 | 1 | 1 | 1 | 1 | 1 | 1 | 1 | 1 | … | 1 | 1 | 1 | 1 | 1 | 1 | 1 | 1 | 1 | 1 |

4 rows × 100 columns
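The `describe()` output summarises each run separately. To see which features recur across runs, a tally like the one below may help. It is a sketch over a hypothetical excerpt (the first four picks of runs 0, 1 and 2 from the table above), not the full `f_list`; `selection_frequency` is a name introduced here for illustration.

```python
from collections import Counter

# Hypothetical excerpt: first four picks of three runs, keyed like f_list.
f_list_excerpt = {
    "0": ["V1201", "V1098", "V483", "V12"],
    "1": ["V1201", "V1098", "V109", "V216"],
    "2": ["V1201", "V1098", "V483", "V503"],
}

def selection_frequency(runs):
    """Count in how many runs each feature was selected."""
    counts = Counter()
    for features in runs.values():
        counts.update(set(features))  # count each feature once per run
    return counts

freq = selection_frequency(f_list_excerpt)
for name, n in freq.most_common(3):
    print(name, n)
```

Applied to all 100 runs, the same tally would give a stability ranking of the candidate variables.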

result = np.array(c1.iloc[:,0:1])

result

array([['V1201'], ['V1098'], ['V12'], ['V892'], ['V188'], ['V205'], ['V376'], ['V187'], ['V666'], ['V1062'], ['V1211'], ['V692'], ['V689'], ['V1223'], ['V48'], ['V634'], ['V668'], ['V841'], ['V665'], ['V645'], ['V490'], ['V148'], ['V936'], ['V529'], ['V472'], ['V886'], ['V391'], ['V888'], ['V1203'], ['V667'], ['V1457'], ['V1155'], ['V395'], ['V646'], ['V712'], ['V931'], ['V1340'], ['V1341'], ['V389'], ['V398']], dtype=object)

#Write the csv

c1.to_csv("c1.csv",index=False,sep=',')

Models Building

# Read data

data_csv <- read.csv("C:\\Users\\User\\OneDrive - student.nsysu.edu.tw\\Educations\\NSYSU\\fu_chung\\bacterial - PCA\\20191202_1471_CRE_46-non-CRE_49_Intensity.csv")

# arrange

if (!require(tidyverse)) install.packages("tidyverse")

## Loading required package: tidyverse

## -- Attaching packages ------------------------------------------------------------------------------------------------ tidyverse 1.2.1 --

## √ ggplot2 3.1.1 √ purrr 0.3.2

## √ tibble 2.1.1 √ dplyr 0.8.0.1

## √ tidyr 0.8.3 √ stringr 1.4.0

## √ readr 1.3.1 √ forcats 0.4.0

## -- Conflicts --------------------------------------------------------------------------------------------------- tidyverse_conflicts() --

## x dplyr::filter() masks stats::filter()

## x dplyr::lag() masks stats::lag()

library(tidyverse)

#sort data by p.value

data_csv <- arrange(data_csv,p.value)

#transpose data

name_protein <- data_csv[,1]

data <- as.data.frame(t(data_csv))

data <- data[-c(1:3),]

#data name

name_variable <- names(data)

data_name <- data.frame(name_variable,name_protein)

data_name <- as.data.frame(t(data_name))

data$CRE <- as.factor(c(rep(1,46),rep(0,49)))

forward backward selection

library(raster)

## Loading required package: sp

##

## Attaching package: 'raster'

## The following object is masked from 'package:dplyr':

##

## select

## The following object is masked from 'package:tidyr':

##

## extract

feature_csv <- read.csv("C:\\Users\\User\\OneDrive - student.nsysu.edu.tw\\Documents\\Python\\GAN\\feature.csv")

#best = c("V993","V322","V864","V689","V598","V1156","V240","V395","V1255","V1218","V634","V529", "V869", "V410", "V521", "V32", "V1201", "V478", "V306", "V964", "V1122", "V485", "V690", "V947", "V677", "V1444", "V832", "V1", "V517", "V351", "V9", "V109", "V872", "V518", "V1239", "V270", "V695", "V147", "V524", "V679", "V320", "V356", "V232", "V687", "V112", "V983", "V146", "V345", "V520", "V198", "V59", "V408", "V110", "V250", "V1275", "V60", "V1253", "V459", "V522", "V889", "V403", "V269", "V87", "V530", "V839", "V399", "V861", "V242", "V823", "V58", "V627", "V84", "V321", "V50", "V483", "V475", "V1396", "V1411", "V1285", "V1093", "V1378", "V413", "V525", "V671", "V30", "V95", "V1199", "V767", "V809", "V1404", "V1401", "V113", "V1198", "V1405", "V1398", "V1209", "V1407", "V1352", "V271", "V528", "V805", "V1397", "V753", "V200", "V1400", "V1408", "V1394", "V593", "V1157", "V233", "V268", "V576", "V181", "V1395", "V820", "V1257", "V514", "V669", "V943", "V489", "V937", "V486", "V513", "V1143", "V966", "V980", "V1274", "V1403", "V343", "V686", "V653", "V1281", "V234", "V1279", "V523", "V870", "V959", "V1278", "V871", "V5", "V775", "V845", "V1211", "V1110", "V1273", "V995", "V1276", "V873", "V595", "V1280", "V1034", "V1228", "V1012", "V1226", "V1094", "V511", "V944", "V1068", "V1146", "V313", "V821", "V122", "V1227", "V386", "V771", "V551", "V538", "V1220", "V1179")

num = 1

best = as.character(feature_csv[1:(61 - length(which(feature_csv[,num] == ""))),num])

bestdf = data[,best]

bestdf$CRE <- as.factor(c(rep(1,46),rep(0,49)))

loocv

#tuning rf

library(randomForest)## randomForest 4.6-14## Type rfNews() to see new features/changes/bug fixes.##

## Attaching package: 'randomForest'## The following object is masked from 'package:dplyr':

##

## combine## The following object is masked from 'package:ggplot2':

##

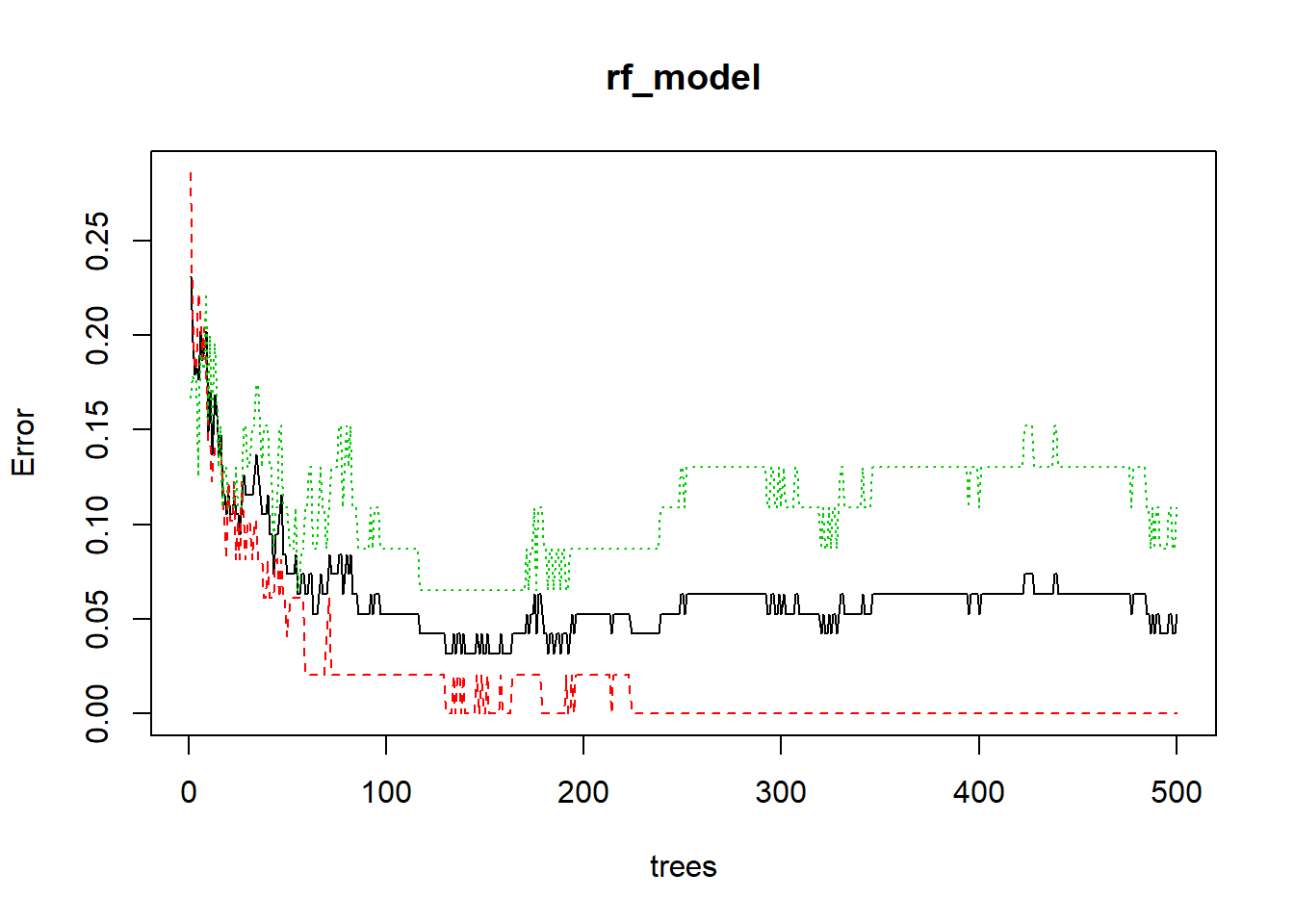

## marginrf_model = randomForest(CRE~.,

data=data,

ntree=500 # num of decision Tree

)

plot(rf_model)

which.min(rf_model$err.rate[,1])## [1] 130A = as.data.frame(rf_model$importance)

A$names <- row.names(A)

A = A[order(A$MeanDecreaseGini,decreasing = T),]

impo <- A[1:50,2]

A[c(1:50),]

## MeanDecreaseGini names

## V994 1.4081240 V994

## V609 1.1766118 V609

## V1426 1.1419769 V1426

## V1428 1.0253725 V1428

## V1251 0.8610926 V1251

## V931 0.8480539 V931

## V205 0.8263031 V205

## V172 0.7950854 V172

## V1228 0.7435799 V1228

## V37 0.6530718 V37

## V288 0.6421747 V288

## V242 0.6185031 V242

## V935 0.6005225 V935

## V1232 0.5621623 V1232

## V264 0.5597400 V264

## V227 0.5562529 V227

## V1380 0.5561197 V1380

## V32 0.5553537 V32

## V1422 0.4995243 V1422

## V819 0.4982642 V819

## V473 0.4902241 V473

## V328 0.4609527 V328

## V1383 0.4406418 V1383

## V84 0.4260847 V84

## V830 0.3931476 V830

## V1 0.3855232 V1

## V131 0.3836959 V131

## V420 0.3690650 V420

## V1072 0.3669098 V1072

## V975 0.3294655 V975

## V834 0.3249310 V834

## V92 0.3201629 V92

## V216 0.3092701 V216

## V9 0.3068394 V9

## V306 0.2959827 V306

## V129 0.2722810 V129

## V181 0.2597386 V181

## V1225 0.2537217 V1225

## V218 0.2452925 V218

## V188 0.2417646 V188

## V313 0.2398082 V313

## V187 0.2382702 V187

## V389 0.2376730 V389

## V480 0.2355510 V480

## V831 0.2245495 V831

## V228 0.2171766 V228

## V542 0.2153996 V542

## V1073 0.2140407 V1073

## V179 0.2072366 V179

## V1061 0.2019502 V1061

impodf = data[,impo]

impodf$CRE <- as.factor(c(rep(1,46),rep(0,49)))

output the importance list
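For readers following along in Python, the ranking step (sort by importance, keep the top k names) can be sketched as below. The five values are copied from the MeanDecreaseGini listing above and deliberately scrambled; `top_k_features` is a hypothetical helper, not part of randomForest or any other library.

```python
def top_k_features(importance, k):
    """Return the k feature names with the largest importance, best first."""
    ranked = sorted(importance.items(), key=lambda item: item[1], reverse=True)
    return [name for name, _ in ranked[:k]]

# Five MeanDecreaseGini values from the listing above, in scrambled order.
importance = {
    "V1251": 0.8610926,
    "V994": 1.4081240,
    "V1428": 1.0253725,
    "V609": 1.1766118,
    "V1426": 1.1419769,
}

top3 = top_k_features(importance, 3)
print(top3)
```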

library(randomForest)

mean_loocv <- vector()

for(j in c(1:20)){
  rf_loocv_accuracy <- vector()
  for(i in c(1:95)){
    train = bestdf[-i, ]
    test = bestdf[i, ]
    rf_model = randomForest(CRE~.,
                            data=train,
                            ntree=100 # num of decision Tree
    )
    test.pred = predict(rf_model, test)
    #Accuracy
    confus.matrix = table(real=test$CRE, predict=test.pred)
    rf_loocv_accuracy[i]=sum(diag(confus.matrix))/sum(confus.matrix)
  }
  #LOOCV test accuracy
  mean_loocv[j] = mean(rf_loocv_accuracy) # Accuracy with LOOCV = 0.9157895
  mean(rf_loocv_accuracy)
}

mean_loocv

write.csv(mean_loocv,"mean_loocv.csv")

loocv rf for 100 times 60% fb features
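The leave-one-out loop used in this report can be sketched in Python as follows. This is an illustration under stated assumptions, not the author's random forest: it swaps in a deterministic one-dimensional nearest-centroid classifier so that it runs with the standard library alone, and the data are made up. `loocv_accuracy` and `nearest_centroid_predict` are hypothetical names.

```python
from statistics import mean

def nearest_centroid_predict(train_x, train_y, x):
    """Predict the class whose training mean is closest to x (1-D toy classifier)."""
    centroids = {
        c: mean(v for v, lab in zip(train_x, train_y) if lab == c)
        for c in set(train_y)
    }
    return min(centroids, key=lambda c: abs(x - centroids[c]))

def loocv_accuracy(xs, ys, predict):
    """Hold out each sample once, train on the rest, and average the hits."""
    hits = 0
    for i in range(len(xs)):
        train_x = xs[:i] + xs[i + 1:]
        train_y = ys[:i] + ys[i + 1:]
        hits += predict(train_x, train_y, xs[i]) == ys[i]
    return hits / len(xs)

# Made-up data: the last point is labelled 0 but sits closer to class 1.
xs = [0.1, 0.3, 0.2, 0.9, 1.1, 1.0, 0.65]
ys = [0, 0, 0, 1, 1, 1, 0]

acc = loocv_accuracy(xs, ys, nearest_centroid_predict)
print(acc)
```

Because a random forest is stochastic, the R code repeats the whole leave-one-out pass 20 times and records each mean; with a deterministic classifier like the one in this sketch, a single pass is enough.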

result <- as.data.frame(matrix())

for(k in c(1:101)){
  num = k
  best = as.character(feature_csv[1:(61 - length(which(feature_csv[,num] == ""))),num])
  bestdf = data[,best]
  bestdf$CRE <- as.factor(c(rep(1,46),rep(0,49)))
  library(randomForest)
  mean_loocv <- vector()
  for(j in c(1:20)){
    rf_loocv_accuracy <- vector()
    for(i in c(1:95)){
      train = bestdf[-i, ]
      test = bestdf[i, ]
      rf_model = randomForest(CRE~.,
                              data=train,
                              ntree=100 # num of decision Tree
      )
      test.pred = predict(rf_model, test)
      #Accuracy
      confus.matrix = table(real=test$CRE, predict=test.pred)
      rf_loocv_accuracy[i]=sum(diag(confus.matrix))/sum(confus.matrix)
    }
    #LOOCV test accuracy
    mean_loocv[j] = mean(rf_loocv_accuracy) # Accuracy with LOOCV = 0.9157895
    mean(rf_loocv_accuracy)
  }
  result <- cbind(result, as.data.frame(mean_loocv))
}

write.csv(result,"result.csv")

the final result

label <- read.csv("C:\\Users\\User\\OneDrive - student.nsysu.edu.tw\\Educations\\NSYSU\\fu_chung\\bacterial - PCA\\label.csv")

label

## Names loop1 loop2 loop3 loop4 loop5

## 1 best 0.9473684 0.9578947 0.9263158 0.9157895 0.9263158

## 2 impoall_50 0.9473684 0.9789474 0.9578947 0.9473684 0.9368421

## 3 impoall_60 0.9473684 0.9473684 0.9578947 0.9684211 0.9684211

## 4 impoall_80 0.9684211 0.9684211 0.9684211 0.9578947 0.9578947

## 5 impoall_100 0.9578947 0.9473684 0.9578947 0.9684211 0.9684211

## 6 fb60_1 0.8105263 0.7578947 0.7789474 0.8210526 0.7684211

## 7 fb60_2 0.8315789 0.8315789 0.8105263 0.8000000 0.8000000

## 8 fb60_3 0.6947368 0.6736842 0.7157895 0.6947368 0.7157895

## 9 fb60_4 0.7894737 0.8105263 0.8105263 0.8210526 0.8105263

## 10 fb60_5 0.8421053 0.8631579 0.8421053 0.8526316 0.8526316

## 11 fb60_6 0.8000000 0.7894737 0.7684211 0.8000000 0.7578947

## 12 fb60_7 0.8947368 0.8105263 0.8736842 0.8315789 0.8736842

## 13 fb60_8 0.7684211 0.7684211 0.7894737 0.7894737 0.7578947

## 14 fb60_9 0.8210526 0.8315789 0.8421053 0.8421053 0.8631579

## 15 fb60_10 0.7684211 0.7894737 0.8105263 0.8105263 0.8210526

## 16 fb60_11 0.7894737 0.8000000 0.7789474 0.7894737 0.7894737

## 17 fb60_12 0.8421053 0.8526316 0.8421053 0.8526316 0.8526316

## 18 fb60_13 0.7894737 0.8000000 0.7894737 0.8210526 0.7789474

## 19 fb60_14 0.7052632 0.6947368 0.7052632 0.7263158 0.6631579

## 20 fb60_15 0.8526316 0.8736842 0.8210526 0.8000000 0.8105263

## 21 fb60_16 0.6947368 0.6736842 0.6631579 0.6947368 0.6736842

## 22 fb60_17 0.6105263 0.5789474 0.6210526 0.6210526 0.6210526

## 23 fb60_18 0.8631579 0.8105263 0.8315789 0.8315789 0.8210526

## 24 fb60_19 0.8315789 0.8526316 0.8315789 0.8315789 0.8210526

## 25 fb60_20 0.8947368 0.8842105 0.8947368 0.8842105 0.9052632

## 26 fb60_21 0.8105263 0.8105263 0.8105263 0.7789474 0.8000000

## 27 fb60_22 0.7578947 0.7368421 0.7263158 0.7368421 0.7263158

## 28 fb60_23 0.6526316 0.6526316 0.6631579 0.6736842 0.6736842

## 29 fb60_24 0.7473684 0.6947368 0.7263158 0.7473684 0.7052632

## 30 fb60_25 0.6947368 0.6842105 0.6947368 0.7052632 0.7157895

## 31 fb60_26 0.6947368 0.6421053 0.6421053 0.6526316 0.6736842

## 32 fb60_27 0.8315789 0.8421053 0.8526316 0.8736842 0.8421053

## 33 fb60_28 0.8947368 0.8631579 0.8842105 0.8842105 0.9052632

## 34 fb60_29 0.8736842 0.8631579 0.8631579 0.8842105 0.8631579

## 35 fb60_30 0.8315789 0.8210526 0.8105263 0.8315789 0.8210526

## 36 fb60_31 0.8631579 0.8526316 0.8842105 0.8526316 0.8842105

## 37 fb60_32 0.8105263 0.8421053 0.8421053 0.8105263 0.8526316

## 38 fb60_33 0.8000000 0.8000000 0.8421053 0.8000000 0.8000000

## 39 fb60_34 0.8421053 0.8421053 0.8526316 0.8210526 0.8421053

## 40 fb60_35 0.8210526 0.8105263 0.7684211 0.8105263 0.8210526

## 41 fb60_36 0.8631579 0.8421053 0.8631579 0.8315789 0.8526316

## 42 fb60_37 0.9052632 0.9052632 0.9263158 0.8842105 0.9368421

## 43 fb60_38 0.8315789 0.8526316 0.8315789 0.8421053 0.8315789

## 44 fb60_39 0.8736842 0.8842105 0.8736842 0.8947368 0.8842105

## 45 fb60_40 0.8105263 0.8210526 0.8000000 0.8210526 0.8210526

## 46 fb60_41 0.7578947 0.7368421 0.7684211 0.7368421 0.7684211

## 47 fb60_42 0.7368421 0.7368421 0.7263158 0.7157895 0.7368421

## 48 fb60_43 0.7473684 0.7789474 0.7684211 0.7473684 0.7684211

## 49 fb60_44 0.8315789 0.7789474 0.8000000 0.7894737 0.7684211

## 50 fb60_45 0.7368421 0.7684211 0.7578947 0.7578947 0.7789474

## 51 fb60_46 0.8105263 0.7894737 0.8000000 0.8210526 0.8105263

## 52 fb60_47 0.7263158 0.7894737 0.7263158 0.7684211 0.7368421

## 53 fb60_48 0.8315789 0.8526316 0.7789474 0.8315789 0.8315789

## 54 fb60_49 0.8105263 0.8105263 0.8315789 0.8210526 0.8105263

## 55 fb60_50 0.7578947 0.6947368 0.7157895 0.7368421 0.7263158

## 56 fb60_51 0.8105263 0.8105263 0.8315789 0.8210526 0.8210526

## 57 fb60_52 0.7789474 0.7578947 0.7473684 0.7473684 0.7368421

## 58 fb60_53 0.8526316 0.8210526 0.8526316 0.8526316 0.8526316

## 59 fb60_54 0.8210526 0.8000000 0.7894737 0.8210526 0.8105263

## 60 fb60_55 0.7368421 0.7789474 0.7368421 0.7789474 0.7789474

## 61 fb60_56 0.8210526 0.8421053 0.8210526 0.8210526 0.8315789

## 62 fb60_57 0.8421053 0.8105263 0.8315789 0.7894737 0.7578947

## 63 fb60_58 0.7894737 0.8105263 0.8105263 0.8000000 0.8210526

## 64 fb60_59 0.8736842 0.8631579 0.8631579 0.8842105 0.8736842

## 65 fb60_60 0.9052632 0.8842105 0.8947368 0.9052632 0.8736842

## 66 fb60_61 0.8526316 0.8315789 0.8421053 0.8315789 0.8315789

## 67 fb60_62 0.8000000 0.8105263 0.8000000 0.7789474 0.8421053

## 68 fb60_63 0.8315789 0.8526316 0.8631579 0.8315789 0.8631579

## 69 fb60_64 0.7578947 0.7368421 0.7473684 0.7157895 0.7578947

## 70 fb60_65 0.6947368 0.7473684 0.7157895 0.7157895 0.7052632

## 71 fb60_66 0.6842105 0.6842105 0.6947368 0.7052632 0.7052632

## 72 fb60_67 0.6842105 0.6842105 0.6842105 0.6947368 0.6842105

## 73 fb60_68 0.8105263 0.8210526 0.8000000 0.8421053 0.8526316

## 74 fb60_69 0.8315789 0.8526316 0.8421053 0.8421053 0.8526316

## 75 fb60_70 0.8631579 0.8631579 0.8526316 0.8947368 0.8421053

## 76 fb60_71 0.8105263 0.8105263 0.7894737 0.8000000 0.8000000

## 77 fb60_72 0.6736842 0.6842105 0.7157895 0.6736842 0.6947368

## 78 fb60_73 0.6842105 0.6947368 0.7052632 0.6947368 0.6631579

## 79 fb60_74 0.6631579 0.7052632 0.7052632 0.7263158 0.7473684

## 80 fb60_75 0.7473684 0.7578947 0.7473684 0.7789474 0.7368421

## 81 fb60_76 0.8947368 0.8947368 0.9052632 0.8842105 0.8947368

## 82 fb60_77 0.7894737 0.7578947 0.7894737 0.7473684 0.8000000

## 83 fb60_78 0.7368421 0.7578947 0.7368421 0.7578947 0.7473684

## 84 fb60_79 0.8105263 0.8736842 0.8842105 0.8526316 0.8526316

## 85 fb60_80 0.7684211 0.7263158 0.7263158 0.7684211 0.7578947

## 86 fb60_81 0.6631579 0.6210526 0.6736842 0.6842105 0.6526316

## 87 fb60_82 0.6947368 0.6631579 0.7052632 0.7052632 0.7157895

## 88 fb60_83 0.7368421 0.7578947 0.7263158 0.7368421 0.7789474

## 89 fb60_84 0.7473684 0.7789474 0.7368421 0.7578947 0.7368421

## 90 fb60_85 0.7894737 0.8000000 0.7789474 0.8000000 0.7894737

## 91 fb60_86 0.8631579 0.8631579 0.8526316 0.8526316 0.8631579

## 92 fb60_87 0.8000000 0.7789474 0.7894737 0.8000000 0.8210526

## 93 fb60_88 0.8210526 0.8210526 0.8210526 0.8105263 0.8210526

## 94 fb60_89 0.7263158 0.7684211 0.7578947 0.7473684 0.7789474

## 95 fb60_90 0.8421053 0.8105263 0.8421053 0.8105263 0.8526316

## 96 fb60_91 0.8842105 0.8842105 0.8947368 0.8421053 0.8631579

## 97 fb60_92 0.8210526 0.7894737 0.8210526 0.8210526 0.8000000

## 98 fb60_93 0.8526316 0.8421053 0.8105263 0.8315789 0.8421053

## 99 fb60_94 0.8947368 0.8526316 0.8947368 0.8842105 0.8736842

## 100 fb60_95 0.8947368 0.9052632 0.9052632 0.9052632 0.9052632

## 101 fb60_96 0.8315789 0.7789474 0.8000000 0.7894737 0.8105263

## 102 fb60_97 0.8421053 0.8315789 0.8315789 0.8315789 0.8315789

## 103 fb60_98 0.8736842 0.9052632 0.8842105 0.8947368 0.8842105

## 104 fb60_99 0.7894737 0.7894737 0.8000000 0.8210526 0.7894737

## 105 fb60_100 0.9157895 0.8842105 0.9052632 0.8736842 0.8526316

## 106 fb60_101 0.9263158 0.9157895 0.9263158 0.9263158 0.9157895

## loop6 loop7 loop8 loop9 loop10 loop11 loop12

## 1 0.9263158 0.9052632 0.9473684 0.9578947 0.9473684 0.9578947 0.9578947

## 2 0.9473684 0.9684211 0.9578947 0.9368421 0.9578947 0.9578947 0.9368421

## 3 0.9578947 0.9578947 0.9578947 0.9684211 0.9684211 0.9578947 0.9684211

## 4 0.9684211 0.9684211 0.9684211 0.9578947 0.9684211 0.9578947 0.9684211

## 5 0.9368421 0.9578947 0.9473684 0.9684211 0.9368421 0.9578947 0.9578947

## 6 0.8000000 0.8000000 0.7894737 0.7789474 0.7789474 0.8421053 0.8315789

## 7 0.8210526 0.8421053 0.7894737 0.8526316 0.8105263 0.7894737 0.8000000

## 8 0.7157895 0.6947368 0.6842105 0.6842105 0.6947368 0.7157895 0.6631579

## 9 0.8210526 0.8000000 0.8000000 0.7789474 0.7789474 0.8000000 0.8000000

## 10 0.8631579 0.8105263 0.8421053 0.8421053 0.8526316 0.8315789 0.8421053

## 11 0.7578947 0.7684211 0.8000000 0.7578947 0.7684211 0.8000000 0.7789474

## 12 0.8421053 0.8526316 0.8631579 0.8631579 0.8315789 0.8526316 0.8421053

## 13 0.7684211 0.7684211 0.8000000 0.7684211 0.7789474 0.8000000 0.7789474

## 14 0.8526316 0.8421053 0.8210526 0.8315789 0.8421053 0.8421053 0.8421053

## 15 0.8000000 0.7789474 0.7894737 0.8210526 0.8105263 0.8000000 0.8000000

## 16 0.8000000 0.8000000 0.7684211 0.7894737 0.8000000 0.8000000 0.7684211

## 17 0.8421053 0.8526316 0.8210526 0.8736842 0.8210526 0.8526316 0.8421053

## 18 0.7789474 0.8105263 0.8210526 0.8000000 0.7894737 0.7684211 0.8315789

## 19 0.6842105 0.6842105 0.6947368 0.6842105 0.6736842 0.7157895 0.6842105

## 20 0.8631579 0.8105263 0.8105263 0.8421053 0.8210526 0.8421053 0.8526316

## 21 0.6842105 0.6736842 0.6947368 0.6947368 0.6631579 0.6842105 0.6842105

## 22 0.6315789 0.6105263 0.6000000 0.5894737 0.6000000 0.5789474 0.6210526

## 23 0.8526316 0.8526316 0.8210526 0.8421053 0.7894737 0.8210526 0.8315789

## 24 0.8526316 0.8315789 0.8526316 0.8315789 0.8526316 0.8421053 0.8736842

## 25 0.9157895 0.8842105 0.8736842 0.8842105 0.8842105 0.9157895 0.8947368

## 26 0.8105263 0.8421053 0.8210526 0.7684211 0.8210526 0.8000000 0.8105263

## 27 0.7578947 0.7473684 0.7368421 0.7368421 0.7263158 0.7473684 0.7473684

## 28 0.6736842 0.6526316 0.6526316 0.6315789 0.6526316 0.6315789 0.6947368

## 29 0.7157895 0.7052632 0.7368421 0.7368421 0.7263158 0.7157895 0.7157895

## 30 0.6842105 0.7157895 0.7157895 0.6947368 0.7157895 0.6947368 0.7052632

## 31 0.6526316 0.6315789 0.7157895 0.6736842 0.6526316 0.6631579 0.7263158

## 32 0.8736842 0.8526316 0.8421053 0.8631579 0.8315789 0.8526316 0.8421053

## 33 0.8842105 0.8842105 0.8842105 0.8736842 0.8842105 0.8842105 0.8631579

## 34 0.8631579 0.8526316 0.8842105 0.8736842 0.8526316 0.8526316 0.8736842

## 35 0.7894737 0.8210526 0.8000000 0.8105263 0.8421053 0.8421053 0.8421053

## 36 0.8526316 0.8631579 0.8421053 0.8315789 0.8736842 0.8736842 0.8526316

## 37 0.8000000 0.8210526 0.8000000 0.8105263 0.8210526 0.8210526 0.8000000

## 38 0.8000000 0.8210526 0.8000000 0.7894737 0.8105263 0.8105263 0.8421053

## 39 0.8421053 0.8421053 0.8526316 0.8421053 0.8526316 0.8000000 0.8421053

## 40 0.7894737 0.8105263 0.8000000 0.8000000 0.8105263 0.8000000 0.7789474

## 41 0.8421053 0.8631579 0.8315789 0.8421053 0.8421053 0.8105263 0.8526316

## 42 0.9263158 0.9052632 0.9263158 0.9052632 0.9052632 0.9052632 0.9263158

## 43 0.8526316 0.8526316 0.8526316 0.8526316 0.8315789 0.8526316 0.8526316

## 44 0.8947368 0.8842105 0.8947368 0.8736842 0.8842105 0.8947368 0.8736842

## 45 0.8315789 0.8000000 0.8210526 0.8105263 0.8105263 0.8000000 0.8000000

## 46 0.7578947 0.7368421 0.7473684 0.7368421 0.7684211 0.7473684 0.7578947

## 47 0.7473684 0.7263158 0.6842105 0.7263158 0.6947368 0.7263158 0.7263158

## 48 0.7473684 0.7578947 0.7368421 0.7894737 0.7578947 0.7684211 0.7894737

## 49 0.8315789 0.7789474 0.7789474 0.7578947 0.8105263 0.8000000 0.8105263

## 50 0.7368421 0.7263158 0.7578947 0.7578947 0.7157895 0.7789474 0.7578947

## 51 0.8000000 0.7789474 0.8000000 0.8210526 0.8000000 0.8315789 0.8526316

## 52 0.7578947 0.7368421 0.7473684 0.7789474 0.7789474 0.7263158 0.7263158

## 53 0.8315789 0.8105263 0.8315789 0.8315789 0.8105263 0.8526316 0.8526316

## 54 0.8210526 0.8315789 0.8000000 0.8000000 0.8315789 0.8000000 0.8000000

## 55 0.7368421 0.7052632 0.6947368 0.7052632 0.7052632 0.6842105 0.6947368

## 56 0.8000000 0.8105263 0.8315789 0.8210526 0.8210526 0.8105263 0.8210526

## 57 0.7684211 0.7578947 0.7578947 0.7368421 0.7473684 0.7684211 0.7473684

## 58 0.8526316 0.8736842 0.8526316 0.8315789 0.8736842 0.8421053 0.8526316

## 59 0.8210526 0.7894737 0.8210526 0.8315789 0.7894737 0.7894737 0.8105263

## 60 0.7578947 0.7578947 0.7368421 0.7578947 0.7894737 0.7894737 0.7684211

## 61 0.8105263 0.8000000 0.8105263 0.8315789 0.8105263 0.8210526 0.8000000

## 62 0.8315789 0.8105263 0.8105263 0.7578947 0.8000000 0.8000000 0.7789474

## 63 0.8000000 0.8105263 0.7789474 0.8210526 0.8210526 0.8315789 0.8105263

## 64 0.8631579 0.8631579 0.8526316 0.8736842 0.8631579 0.8526316 0.8736842

## 65 0.9052632 0.9263158 0.8947368 0.8842105 0.8947368 0.9157895 0.9157895

## 66 0.8000000 0.8526316 0.8000000 0.8210526 0.8105263 0.8315789 0.8210526

## 67 0.8421053 0.8315789 0.8210526 0.8105263 0.8421053 0.8421053 0.8210526

## 68 0.8421053 0.8631579 0.8421053 0.8421053 0.8421053 0.8736842 0.8421053

## 69 0.7473684 0.7368421 0.7263158 0.7578947 0.7368421 0.7473684 0.7157895

## 70 0.7052632 0.7157895 0.7368421 0.6947368 0.6736842 0.7473684 0.7368421

## 71 0.7052632 0.7157895 0.7052632 0.7052632 0.6947368 0.7052632 0.7368421

## 72 0.6842105 0.6947368 0.6947368 0.6947368 0.6736842 0.6736842 0.6947368

## 73 0.8315789 0.8421053 0.8421053 0.8105263 0.8421053 0.8105263 0.8315789

## 74 0.8526316 0.8421053 0.8421053 0.8631579 0.8421053 0.8631579 0.8526316

## 75 0.8210526 0.8947368 0.8526316 0.8736842 0.8210526 0.8421053 0.8736842

## 76 0.8000000 0.7684211 0.7684211 0.7894737 0.7789474 0.7789474 0.7684211

## 77 0.7157895 0.7263158 0.6947368 0.7052632 0.7052632 0.6947368 0.6947368

## 78 0.6842105 0.6736842 0.6736842 0.6736842 0.6947368 0.7157895 0.6736842

## 79 0.6631579 0.7052632 0.6947368 0.6947368 0.7052632 0.6842105 0.6736842

## 80 0.7578947 0.7578947 0.7157895 0.7789474 0.7578947 0.7473684 0.7684211

## 81 0.8736842 0.8947368 0.8842105 0.8947368 0.8842105 0.9157895 0.8947368

## 82 0.7473684 0.7894737 0.8000000 0.8000000 0.8210526 0.7684211 0.8315789

## 83 0.7263158 0.7473684 0.7368421 0.7684211 0.7368421 0.7578947 0.7473684

## 84 0.8526316 0.8736842 0.8315789 0.8421053 0.8421053 0.8526316 0.8315789

## 85 0.7789474 0.7789474 0.7263158 0.7263158 0.7052632 0.7157895 0.7263158

## 86 0.6631579 0.6526316 0.6210526 0.6631579 0.6526316 0.6736842 0.6526316

## 87 0.6842105 0.7368421 0.7263158 0.7157895 0.7052632 0.7157895 0.6736842

## 88 0.7263158 0.7578947 0.7578947 0.7473684 0.7368421 0.7578947 0.7578947

## 89 0.7473684 0.7578947 0.7263158 0.7684211 0.7473684 0.7052632 0.7263158

## 90 0.8000000 0.7789474 0.7789474 0.7578947 0.7684211 0.7684211 0.8105263

## 91 0.8210526 0.8421053 0.8526316 0.8421053 0.8210526 0.8315789 0.8526316

## 92 0.8105263 0.8315789 0.8105263 0.8000000 0.8105263 0.7789474 0.8000000

## 93 0.8105263 0.8210526 0.8105263 0.8105263 0.8210526 0.8315789 0.8105263

## 94 0.7263158 0.7368421 0.7578947 0.7473684 0.7473684 0.7894737 0.7368421

## 95 0.8315789 0.8315789 0.8315789 0.8315789 0.8315789 0.8421053 0.8210526

## 96 0.8842105 0.8947368 0.8842105 0.9052632 0.8947368 0.8736842 0.8947368

## 97 0.8000000 0.8210526 0.8000000 0.8000000 0.8315789 0.8210526 0.8210526

## 98 0.8631579 0.8631579 0.8631579 0.8631579 0.8315789 0.8526316 0.8421053

## 99 0.9052632 0.8842105 0.8631579 0.8842105 0.8736842 0.8842105 0.8631579

## 100 0.9052632 0.8947368 0.8842105 0.8947368 0.9157895 0.8947368 0.9157895

## 101 0.8000000 0.8000000 0.7894737 0.7894737 0.8210526 0.7789474 0.7894737

## 102 0.8631579 0.8421053 0.8526316 0.8105263 0.8526316 0.8631579 0.8315789

## 103 0.8947368 0.8947368 0.9052632 0.8842105 0.9052632 0.8842105 0.8842105

## 104 0.8000000 0.7684211 0.8315789 0.8210526 0.8315789 0.7894737 0.7894737

## 105 0.8947368 0.8947368 0.8947368 0.9052632 0.8736842 0.8947368 0.8947368

## 106 0.9157895 0.9263158 0.8842105 0.9052632 0.9368421 0.8842105 0.9263158

## loop13 loop14 loop15 loop16 loop17 loop18 loop19

## 1 0.9473684 0.9578947 0.9368421 0.9473684 0.9263158 0.9473684 0.9473684

## 2 0.9578947 0.9473684 0.9789474 0.9578947 0.9578947 0.9368421 0.9578947

## 3 0.9578947 0.9578947 0.9578947 0.9684211 0.9473684 0.9578947 0.9684211

## 4 0.9473684 0.9578947 0.9578947 0.9578947 0.9578947 0.9473684 0.9473684

## 5 0.9578947 0.9473684 0.9473684 0.9473684 0.9578947 0.9684211 0.9473684

## 6 0.7894737 0.7894737 0.7894737 0.7894737 0.8210526 0.7789474 0.8315789

## 7 0.7894737 0.8210526 0.8000000 0.8105263 0.8210526 0.8105263 0.8210526

## 8 0.6947368 0.6842105 0.6947368 0.6842105 0.6947368 0.6947368 0.7052632

## 9 0.7894737 0.8105263 0.8105263 0.7894737 0.8000000 0.8315789 0.8000000

## 10 0.8526316 0.8421053 0.8421053 0.8526316 0.8315789 0.8421053 0.8631579

## 11 0.7684211 0.7894737 0.7684211 0.7684211 0.7684211 0.7789474 0.7894737

## 12 0.8315789 0.9157895 0.8631579 0.8526316 0.8842105 0.8526316 0.8526316

## 13 0.7578947 0.7789474 0.7684211 0.7894737 0.8105263 0.7263158 0.7684211

## 14 0.8421053 0.8421053 0.8210526 0.8421053 0.8105263 0.8526316 0.8210526

## 15 0.7789474 0.8210526 0.8000000 0.8000000 0.8000000 0.8000000 0.7684211

## 16 0.7684211 0.8210526 0.7578947 0.7684211 0.8105263 0.8000000 0.8000000

## 17 0.8315789 0.8631579 0.8105263 0.8631579 0.8421053 0.8421053 0.8421053

## 18 0.8210526 0.8000000 0.8105263 0.7894737 0.8105263 0.8000000 0.8000000

## 19 0.7052632 0.7052632 0.6842105 0.7157895 0.7263158 0.7157895 0.6947368

## 20 0.8315789 0.8631579 0.8421053 0.8421053 0.8421053 0.8421053 0.8526316

## 21 0.6842105 0.6842105 0.6526316 0.6842105 0.6526316 0.6842105 0.6842105

## 22 0.6210526 0.5789474 0.6210526 0.6210526 0.6105263 0.6105263 0.6315789

## 23 0.8210526 0.8105263 0.8315789 0.8210526 0.8315789 0.8421053 0.8315789

## 24 0.8210526 0.8631579 0.8315789 0.8315789 0.8315789 0.8526316 0.8315789

## 25 0.8842105 0.9157895 0.8947368 0.8842105 0.8736842 0.9263158 0.8947368

## 26 0.7894737 0.8000000 0.8210526 0.7789474 0.8210526 0.7894737 0.8000000

## 27 0.7684211 0.7578947 0.7263158 0.7263158 0.7473684 0.7578947 0.7263158

## 28 0.6736842 0.6631579 0.6736842 0.6947368 0.6210526 0.6631579 0.6842105

## 29 0.7263158 0.7157895 0.7052632 0.7052632 0.7263158 0.7368421 0.7263158

## 30 0.6631579 0.7157895 0.7052632 0.6947368 0.7052632 0.7052632 0.7157895

## 31 0.6947368 0.6631579 0.6736842 0.6421053 0.6631579 0.6526316 0.6526316

## 32 0.8315789 0.8315789 0.8105263 0.7894737 0.8315789 0.8526316 0.8421053

## 33 0.8736842 0.8842105 0.8842105 0.8842105 0.9052632 0.9052632 0.8947368

## 34 0.8526316 0.8631579 0.8842105 0.8842105 0.8631579 0.8842105 0.8631579

## 35 0.8315789 0.8315789 0.8315789 0.8105263 0.8421053 0.8315789 0.8315789

## 36 0.8526316 0.8736842 0.8526316 0.8315789 0.8421053 0.8421053 0.8315789

## 37 0.8421053 0.8000000 0.8315789 0.8210526 0.8421053 0.8421053 0.8526316

## 38 0.8000000 0.8210526 0.8210526 0.8000000 0.8421053 0.8421053 0.8105263

## 39 0.8315789 0.8210526 0.8421053 0.8421053 0.8421053 0.8421053 0.8421053

## 40 0.7894737 0.8105263 0.8000000 0.8000000 0.7789474 0.7894737 0.7894737

## 41 0.8631579 0.8631579 0.8526316 0.8631579 0.8631579 0.8526316 0.8526316

## 42 0.8947368 0.8947368 0.9157895 0.8947368 0.9157895 0.9157895 0.9263158

## 43 0.8421053 0.8421053 0.8421053 0.8526316 0.8526316 0.8736842 0.8631579

## 44 0.8736842 0.8947368 0.8842105 0.8526316 0.8947368 0.8736842 0.8842105

## 45 0.8421053 0.7789474 0.8000000 0.8105263 0.8105263 0.8105263 0.7894737

## 46 0.7684211 0.7473684 0.7578947 0.7263158 0.7473684 0.7684211 0.7473684

## 47 0.7263158 0.7157895 0.7263158 0.7368421 0.7368421 0.7473684 0.7473684

## 48 0.7473684 0.7578947 0.7789474 0.7894737 0.7684211 0.7684211 0.8000000

## 49 0.8000000 0.7789474 0.7578947 0.8105263 0.8105263 0.8315789 0.8105263

## 50 0.7578947 0.7368421 0.7263158 0.7368421 0.7578947 0.7473684 0.7684211

## 51 0.8000000 0.8105263 0.8105263 0.7894737 0.7894737 0.7894737 0.7894737

## 52 0.7473684 0.7684211 0.7684211 0.7473684 0.7789474 0.7684211 0.7473684

## 53 0.8421053 0.8210526 0.8421053 0.8210526 0.8210526 0.8105263 0.8315789

## 54 0.8210526 0.8105263 0.8105263 0.8315789 0.8105263 0.8315789 0.7894737

## 55 0.6736842 0.7263158 0.6842105 0.7157895 0.6947368 0.7263158 0.7263158

## 56 0.8315789 0.8105263 0.8105263 0.8105263 0.8210526 0.8210526 0.8105263

## 57 0.7368421 0.7684211 0.7473684 0.7578947 0.7473684 0.7578947 0.7789474

## 58 0.8526316 0.8315789 0.8421053 0.8631579 0.8421053 0.8631579 0.8526316

## 59 0.8210526 0.8105263 0.8000000 0.8210526 0.7789474 0.8315789 0.8105263

## 60 0.7473684 0.7578947 0.7894737 0.7684211 0.7578947 0.7473684 0.7894737

## 61 0.8315789 0.8421053 0.7789474 0.8105263 0.8105263 0.8105263 0.8105263

## 62 0.7789474 0.7894737 0.8105263 0.7789474 0.8000000 0.8000000 0.8000000

## 63 0.8105263 0.8000000 0.8105263 0.8000000 0.8105263 0.8210526 0.8105263

## 64 0.8631579 0.8631579 0.8631579 0.8631579 0.8631579 0.8947368 0.8736842

## 65 0.8842105 0.8736842 0.9157895 0.9052632 0.8947368 0.8842105 0.8947368

## 66 0.8210526 0.8315789 0.8315789 0.8421053 0.8000000 0.8421053 0.8315789

## 67 0.8526316 0.8105263 0.8210526 0.8000000 0.8315789 0.8105263 0.8210526

## 68 0.8315789 0.8315789 0.8631579 0.8210526 0.8315789 0.8631579 0.8315789

## 69 0.7473684 0.7263158 0.7473684 0.7263158 0.7263158 0.7368421 0.7263158

## 70 0.7263158 0.7052632 0.6842105 0.7052632 0.7263158 0.7157895 0.7473684

## 71 0.7157895 0.6842105 0.7263158 0.7052632 0.7052632 0.6736842 0.6947368

## 72 0.7157895 0.6947368 0.6631579 0.6947368 0.6947368 0.7157895 0.7052632

## 73 0.8421053 0.8315789 0.8210526 0.8210526 0.8315789 0.8631579 0.8315789

## 74 0.8631579 0.8315789 0.8631579 0.8526316 0.8315789 0.8526316 0.8421053

## 75 0.8736842 0.8736842 0.8421053 0.8000000 0.8736842 0.8736842 0.8631579

## 76 0.7578947 0.7684211 0.7789474 0.7789474 0.7789474 0.7684211 0.8000000

## 77 0.6842105 0.6947368 0.6947368 0.7052632 0.7052632 0.7157895 0.6842105

## 78 0.6631579 0.7052632 0.7157895 0.7052632 0.6736842 0.6631579 0.7157895

## 79 0.6842105 0.7052632 0.6842105 0.6736842 0.7263158 0.7157895 0.7263158

## 80 0.7789474 0.7894737 0.7578947 0.7368421 0.7368421 0.7263158 0.7368421

## 81 0.9157895 0.8947368 0.8842105 0.8947368 0.9052632 0.8842105 0.9052632

## 82 0.7578947 0.7789474 0.7684211 0.7684211 0.7684211 0.7578947 0.7894737

## 83 0.7789474 0.7473684 0.7368421 0.7473684 0.7578947 0.7263158 0.7368421

## 84 0.8631579 0.8421053 0.8842105 0.8631579 0.8210526 0.8736842 0.8631579

## 85 0.7368421 0.7368421 0.7578947 0.7368421 0.7368421 0.7684211 0.7052632

## 86 0.6631579 0.6421053 0.6526316 0.6421053 0.6631579 0.6631579 0.6315789

## 87 0.6842105 0.7052632 0.7052632 0.7052632 0.7263158 0.7157895 0.6947368

## 88 0.7368421 0.7157895 0.7368421 0.7578947 0.7263158 0.7473684 0.7473684

## 89 0.7578947 0.7368421 0.7789474 0.7368421 0.7473684 0.7052632 0.7263158

## 90 0.7789474 0.7789474 0.7894737 0.7789474 0.7894737 0.8105263 0.7894737

## 91 0.8736842 0.8210526 0.8631579 0.8526316 0.8631579 0.8210526 0.8631579

## 92 0.8105263 0.8105263 0.7894737 0.8000000 0.8105263 0.8000000 0.8315789

## 93 0.8000000 0.8421053 0.8210526 0.8210526 0.8105263 0.8210526 0.8315789

## 94 0.7473684 0.7789474 0.7789474 0.7578947 0.7684211 0.7473684 0.7473684

## 95 0.8421053 0.7894737 0.8526316 0.8210526 0.8210526 0.8105263 0.8526316

## 96 0.8842105 0.8631579 0.8736842 0.8631579 0.8842105 0.8842105 0.8842105

## 97 0.8210526 0.8105263 0.8315789 0.8210526 0.7789474 0.8000000 0.8421053

## 98 0.8736842 0.8842105 0.8842105 0.8526316 0.8421053 0.8842105 0.8736842

## 99 0.8842105 0.8947368 0.8947368 0.8947368 0.8736842 0.8947368 0.8421053

## 100 0.8842105 0.8947368 0.9052632 0.8842105 0.9052632 0.8736842 0.9157895

## 101 0.8000000 0.8105263 0.8105263 0.8105263 0.7789474 0.8210526 0.8000000

## 102 0.8421053 0.8315789 0.8210526 0.8526316 0.8631579 0.8315789 0.8526316

## 103 0.8842105 0.8947368 0.8842105 0.8947368 0.9052632 0.8842105 0.8947368

## 104 0.8421053 0.7789474 0.8105263 0.8000000 0.7578947 0.8210526 0.8000000

## 105 0.8842105 0.8947368 0.8842105 0.8947368 0.8947368 0.8947368 0.8842105

## 106 0.9052632 0.9052632 0.9263158 0.9157895 0.9052632 0.9052632 0.9052632

## loop20 mean

## 1 0.9473684 0.9415789

## 2 0.9473684 0.9536842

## 3 0.9789474 0.9610526

## 4 0.9684211 0.9610526

## 5 0.9473684 0.9542105

## 6 0.8315789 0.7989474

## 7 0.8105263 0.8131579

## 8 0.6947368 0.6947368

## 9 0.8105263 0.8031579

## 10 0.8526316 0.8457895

## 11 0.7684211 0.7773684

## 12 0.8736842 0.8578947

## 13 0.7684211 0.7752632

## 14 0.8000000 0.8352632

## 15 0.8315789 0.8000000

## 16 0.7894737 0.7894737

## 17 0.8421053 0.8442105

## 18 0.7578947 0.7984211

## 19 0.6947368 0.6978947

## 20 0.8421053 0.8378947

## 21 0.6736842 0.6789474

## 22 0.6105263 0.6094737

## 23 0.8210526 0.8289474

## 24 0.8421053 0.8405263

## 25 0.9157895 0.8952632

## 26 0.8105263 0.8047368

## 27 0.7473684 0.7421053

## 28 0.6421053 0.6610526

## 29 0.7263158 0.7221053

## 30 0.6947368 0.7010526

## 31 0.6736842 0.6668421

## 32 0.8210526 0.8405263

## 33 0.8947368 0.8857895

## 34 0.8526316 0.8673684

## 35 0.8105263 0.8242105

## 36 0.8631579 0.8557895

## 37 0.8421053 0.8252632

## 38 0.8421053 0.8147368

## 39 0.8421053 0.8389474

## 40 0.7894737 0.7984211

## 41 0.8631579 0.8505263

## 42 0.9052632 0.9110526

## 43 0.8631579 0.8484211

## 44 0.8842105 0.8826316

## 45 0.7894737 0.8089474

## 46 0.7684211 0.7526316

## 47 0.7368421 0.7278947

## 48 0.7789474 0.7673684

## 49 0.8210526 0.7978947

## 50 0.7473684 0.7505263

## 51 0.8000000 0.8047368

## 52 0.7684211 0.7547368

## 53 0.8210526 0.8278947

## 54 0.8105263 0.8142105

## 55 0.7263158 0.7115789

## 56 0.8210526 0.8173684

## 57 0.7684211 0.7557895

## 58 0.8736842 0.8515789

## 59 0.8421053 0.8105263

## 60 0.7157895 0.7621053

## 61 0.8105263 0.8163158

## 62 0.7789474 0.7978947

## 63 0.8000000 0.8084211

## 64 0.8631579 0.8673684

## 65 0.8842105 0.8968421

## 66 0.8210526 0.8273684

## 67 0.8000000 0.8194737

## 68 0.8631579 0.8463158

## 69 0.7263158 0.7373684

## 70 0.6947368 0.7147368

## 71 0.7368421 0.7042105

## 72 0.6947368 0.6910526

## 73 0.8421053 0.8310526

## 74 0.8421053 0.8478947

## 75 0.8105263 0.8552632

## 76 0.7789474 0.7836842

## 77 0.7368421 0.7000000

## 78 0.6842105 0.6878947

## 79 0.7157895 0.7000000

## 80 0.7894737 0.7552632

## 81 0.8842105 0.8942105

## 82 0.7789474 0.7805263

## 83 0.7473684 0.7468421

## 84 0.8315789 0.8521053

## 85 0.7684211 0.7426316

## 86 0.6526316 0.6542105

## 87 0.7157895 0.7047368

## 88 0.7473684 0.7447368

## 89 0.7263158 0.7426316

## 90 0.8000000 0.7868421

## 91 0.8842105 0.8500000

## 92 0.8000000 0.8042105

## 93 0.8315789 0.8194737

## 94 0.7684211 0.7557895

## 95 0.8315789 0.8300000

## 96 0.8736842 0.8805263

## 97 0.8421053 0.8147368

## 98 0.8526316 0.8552632

## 99 0.8842105 0.8810526

## 100 0.8947368 0.8989474

## 101 0.8000000 0.8005263

## 102 0.8421053 0.8410526

## 103 0.8842105 0.8910526

## 104 0.8315789 0.8031579

## 105 0.8736842 0.8894737

## 106 0.8947368 0.9126316

Link to label.csv: https://reurl.cc/e5ejOQ
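To compare the candidate models, the final `loop20 mean` block of the output above can be ranked by its `mean` column. The following is a minimal sketch that rests on two assumptions: each row number identifies one candidate variable set, and the last value in each row of that block is the model's average accuracy over the 20 loops. The helper name `parse_rows` and the choice of sample rows are illustrative only and are not part of the original analysis.

```python
# A few rows copied verbatim from the final "loop20 mean" block above.
# Columns: row id, loop20 accuracy, mean accuracy (assumed to be over loop1..loop20).
raw = """\
## 1 0.9473684 0.9415789
## 2 0.9473684 0.9536842
## 3 0.9789474 0.9610526
## 4 0.9684211 0.9610526
## 5 0.9473684 0.9542105
## 6 0.8315789 0.7989474
## 22 0.6105263 0.6094737
"""


def parse_rows(text):
    """Parse '## <id> <value> ...' lines into {id: [values]} (hypothetical helper)."""
    rows = {}
    for line in text.splitlines():
        parts = line.lstrip("#").split()
        # Skip blank lines and header lines such as '## loop20 mean'.
        if not parts or not parts[0].isdigit():
            continue
        rows[int(parts[0])] = [float(v) for v in parts[1:]]
    return rows


rows = parse_rows(raw)

# Rank by the last column (the mean), highest first.
# sorted() is stable, so tied rows keep their original order.
ranked = sorted(rows.items(), key=lambda kv: kv[1][-1], reverse=True)

for model_id, values in ranked[:3]:
    print(model_id, values[-1])
```

Among the sampled rows, rows 3 and 4 tie for the highest mean (0.9610526) and row 22 has the lowest (0.6094737). Running the same ranking over all 106 rows would give the full ordering.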